As with most forms of tech these days, web scrapers have recently seen a surge of claims that they’re somehow based on AI or machine learning tech. While this suggests that an AI will detect exactly what you want extracted from a page, most scrapers are still rule-based (there are some exceptions, such as Diffbot’s Automatic Extraction APIs).

Why does this matter?

Historically rule-based extraction has been the norm. In rule-based extraction, you specify a set of rules for what you want pulled from a page. This is often an HTML element, CSS selector, or a regex pattern. Maybe you want the third bulleted item beneath every paragraph in a text, or all headers, or all links on a page; rule-based extraction can help with that.

Rule-based extraction works in many cases but there are definite downsides. Many of the sites most worth scraping change regularly or have dynamically created pages.

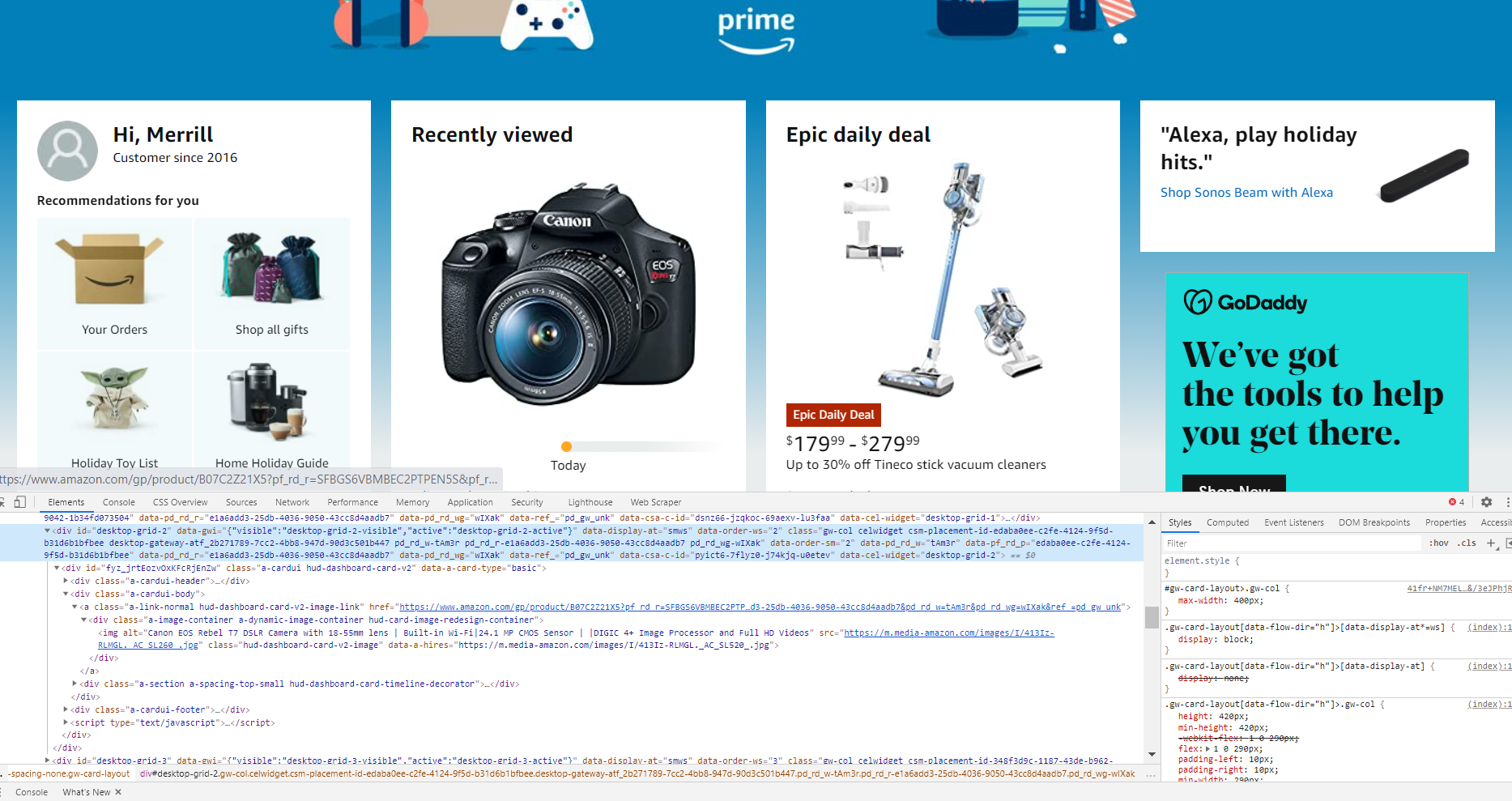

Large eCommerce sites and social networks even employ tactics to inhibit web scraping.

Ever inspected a large eCommerce site, refreshed the page, and noticed that the dynamically created class names for elements have changed? One of the longest Stackoverflow answers I’ve ever seen is one of a number providing techniques to inhibit scraping. Even though public web pages are fair game sans robots.txt instructions not to crawl, public web data is valuable and sites want you to access data through their channels.

A few other rule-based extraction pain points include:

- You likely need an entirely new set of rules for every page you wish to scrape.

- Every time a site changes (even if they aren’t doing so to obstruct scraping) your scraper will likely break.

- You’ll need to specify a rule for each data type you wish to extract (often many) for each page structure.

This means scraping from any collection of sites at scale is both resource-intensive and error-prone for rule-based extractors.

While I’m happy to see knowledge workers and data teams finding value in public web data, Autoscraper (a new web scraper that became the number one trending project on Github) falls into a similar pattern to the types of rule-based extractors I’ve discussed above.

In this case, Autoscraper is something new. When provided with a URL and sample data similar to the data you want to be returned, Autoscraper often delivers on its promise to “learn” what type of data you’re seeking based on an input of sample data. But it’s still a rule-based scraper. It’s essentially a wrapper around BeautifulSoup that allows you to select text by example (a native feature of Xpath). You can see the portion of its source code that translates a string into rules based on more traditional rule-based scrapers below. Or it’s in the source code at line 110.

While one step removed from user input, the above line translates user input into rules.

Don’t get me wrong, this is an improvement from many rule-based scrapers. But it’s not full-blown AI-based web scraping. For each page type on each domain you wish to extract from, you’ll still need to provide some sample data that mirrors the type and form of the data you want to be extracted. Even this input step can be error-prone, with your sample data not providing a strong enough signal to detect what is similar on the page to be extracted.

The power of truly AI-web-based web extraction is that the scraper still returns valuable data when it doesn’t know what type of page will be sent to it next.

There’s no scraper on earth that can handle every single page online. But not every page online has data that is useful to be scraped (or even a discernable intent). As one of three western organizations that have claimed to have crawled a vast majority of the web, Diffbot has broken down a series of page types that cover roughly 98% of the surface of the web. These include product pages, event pages, article pages, video pages, and so forth. Each type requires a slightly different scraper. Together they can handle pages of almost any type without knowing the specifics in advance.

These pages often have somewhat similar structures but not enough similarity to enable a rule-based extractor to work on even a small fraction. It would take a literal army of data teams to create rule-based scrapers for a majority of the eCommerce sites on the web. That’s where Diffbot’s Automatic Extraction APIs come in. Used as actual APIs or through a dashboard paired with Crawlbot (an automatic crawler that can run through an entire domain in minutes), Diffbot’s extraction APIs are trained with vast sets of corresponding pages. They look at both the underlying source of a page (similar to most Python scraping libraries) but also render the pages in a custom browser built for bots so that machine vision techniques can be used to pull valuable data. This leads to the ability to extract visual and non-visual fields of interest with 0 rules and at scale.

For example, below is a snippet of the response from Diffbot’s AI-enabled Product API pointed at a Manuka honey product from iherb.com. Without ever directly specifying what needed to be returned, a range of site-specific and general product fields (like shipping weight, availability, and SKU) were returned. And that’s just a snippet of the response!

Conclusion

For small-time scrapes or niche cases, there’s certainly a role for rule-based extractors. But there are hard limits to rule-based extraction. The level of human input and troubleshooting required to scale rule-based simply can’t keep up with the volume of dynamic content today. And if scraping is a central data pipeline for your org, you should carefully consider the pros and cons of relying on rule-based extraction before “jumping in.”

Resources:

- MIT Tech Review on Diffbot’s Web-Wide Scraping

- Autoscraper Github Repo

- First Gist Above (Autoscraper line 110)

- Second Gist Above (Snippet of Manuka Honey page extracted with rule-less extractor)

- Diffbot’s Automatic Extraction APIs