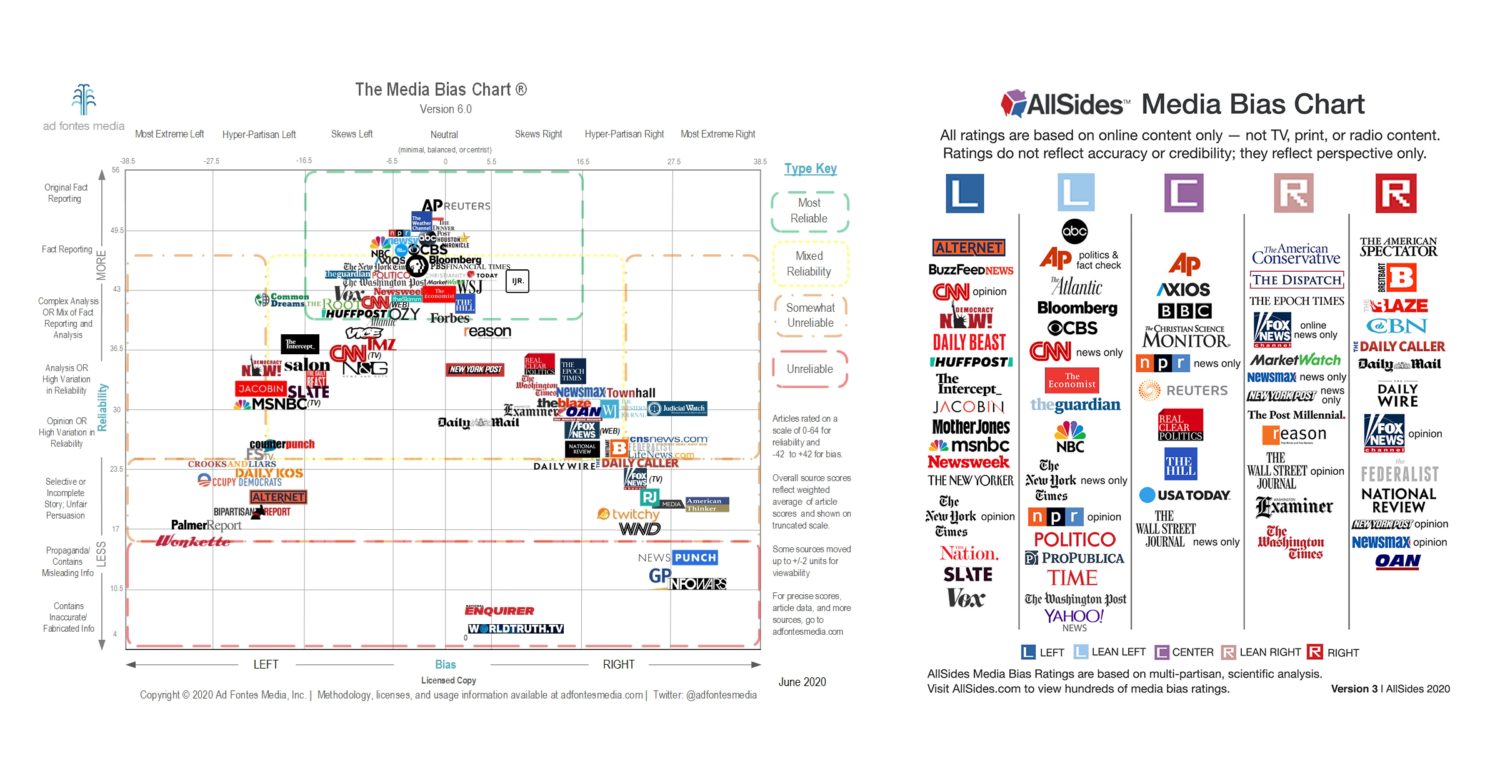

As it becomes increasingly difficult to separate what is real from what is virtual, it becomes increasingly important for us to have tools that measure the biases in the information that we consume every day. Bias has always existed, but as we spend more of our conscious hours online, media, rather than direct experience, is what overwhelmingly shapes our worldviews. Various journalistic organizations and NGOs have studied media bias, producing charts like the following.

Source: Poynter Institute: Should you trust media bias charts?

Most of these methodologies rely on surveying panels of humans, who we know are incredibly biased. The methodologies of both producers of these annual media bias studies can be summarized as follows:

The leading producer of media political bias charts that score the degree to which media outlets lean politically to the left vs. right notes about their methodology:

Keep in mind that this ratings system currently uses humans with subjective biases to rate things that are created by other humans with subjective biases and place them on an objective scale.

– Ad Fontes Media

How do we avoid our own biases (or the biases of a panel of humans) when studying bias? It is well known by now that AI systems (read: statistical models learned from data) trained on human-supplied labels reflect the biases of those human judgements encoded in the data. How do we avoid asking humans to judge the biases of the articles?

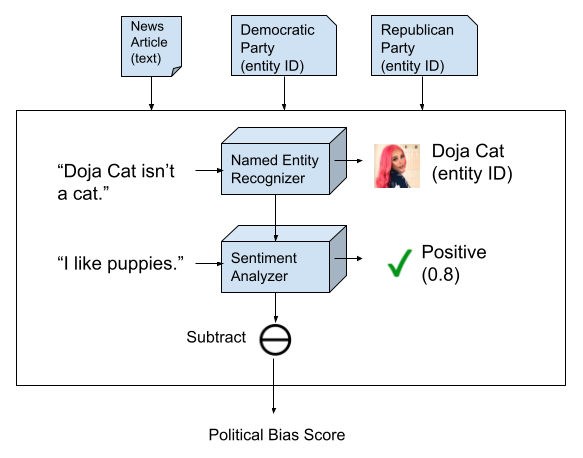

Answer: by building a system that (a) defines the target output with an objective statement and (b) combines independent AI components that are trained on tasks that are orthogonal to the bias scoring task. Here’s what a system we built at Diffbot to score political bias of media outlets looks like:

Via the input parameters, we can define the desired output of the system as the sentiment towards the Republican Party (Diffbot entity ID: EQux7TYFDMgO6n_OByeSXzg) minus the sentiment towards the Democratic Party (Diffbot entity ID: EsAK1CigZMFeqk72s5EidGQ). These entities refer to the Republican and Democratic political parties in the United States. The beauty of this objective definition of system output is that you can modify it by varying the inputs to produce bias scores along any other political spectrum (e.g. Libertarian-Authoritarian, or the multi-party variations in your own country), and the system can produce new scores along that spectrum without another bias-prone re-surveying of humans.
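To make the definition concrete, here is a minimal Python sketch of the score as a difference of two entity-level sentiments. Only the two entity IDs come from the text above; the `mentions` record shape (`entity_id`, `sentiment`), the function names, the averaging of multiple mentions, and the choice to return `None` when either entity is absent are all assumptions made for illustration, not a description of Diffbot's implementation.

```python
from statistics import mean

# Entity IDs quoted in the text above.
REPUBLICAN_PARTY = "EQux7TYFDMgO6n_OByeSXzg"
DEMOCRATIC_PARTY = "EsAK1CigZMFeqk72s5EidGQ"


def entity_sentiment(mentions, entity_id):
    """Average sentiment over all mentions linked to entity_id.

    `mentions` is a hypothetical list of dicts with keys "entity_id"
    and "sentiment". Returns None if the entity is never mentioned.
    """
    scores = [m["sentiment"] for m in mentions if m["entity_id"] == entity_id]
    return mean(scores) if scores else None


def bias_score(mentions, plus_id=REPUBLICAN_PARTY, minus_id=DEMOCRATIC_PARTY):
    """Sentiment towards plus_id minus sentiment towards minus_id.

    Assumption: a text that does not mention both entities gets no score.
    """
    plus = entity_sentiment(mentions, plus_id)
    minus = entity_sentiment(mentions, minus_id)
    if plus is None or minus is None:
        return None
    return plus - minus


# Made-up sentiment values, for illustration only.
example_mentions = [
    {"entity_id": REPUBLICAN_PARTY, "sentiment": -0.5},
    {"entity_id": DEMOCRATIC_PARTY, "sentiment": 0.25},
    {"entity_id": REPUBLICAN_PARTY, "sentiment": -0.25},
]
print(bias_score(example_mentions))
```

Swapping `plus_id` and `minus_id` for any other pair of entity IDs gives a score along a different spectrum, which is the point of defining the output through the inputs.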

The two AI components of the system are (a) a named entity recognizer and (b) a sentiment analyzer.

The named entity recognizer is trained to find subjects and objects in English and link them to Uniform Resource Identifiers (URIs) in the Diffbot Knowledge Graph. The entity recognizer knows nothing of the political bias task and isn’t trained on examples of political/non-political text. What that model learns is the syntax of English, which positions in a sentence constitute a subject or object, and which entity a span of text refers to. The Republican Party and Democratic Party are just two unremarkable entities out of potentially billions of entities in the Diffbot Knowledge Graph that the NER system could link to.

The sentiment analyzer is a model that is trained to determine whether a piece of text is positive or negative, but it also knows nothing about political bias nor has it seen anything in its training set specific to political entities. This model is merely learning how we in general express negativity or positivity. For example, “I like puppies!” is a sentence that indicates the author has positive sentiment towards puppies. “I’m bearish on crypto” is a sentence that indicates the author has negative sentiment towards cryptocurrencies.

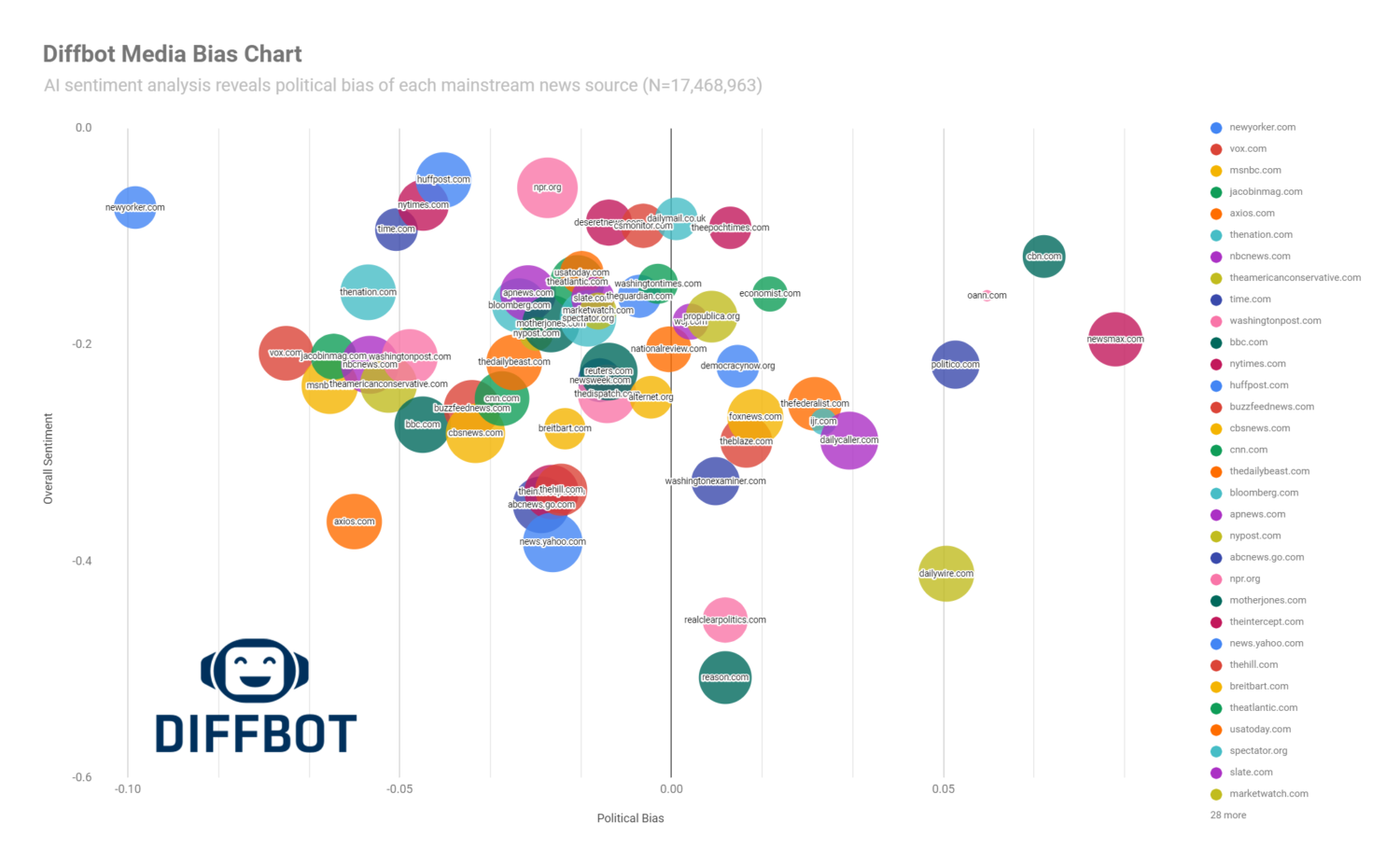

By combining these two independent systems, neither of which has seen the political bias task or has training data that was gathered for that purpose, we can build a system that calculates the bias in text along a spectrum defined by any two entities. As an experiment, we queried the Diffbot Knowledge Graph for content from mainstream media outlets and ran the bias detector on the 17,468,963 resulting articles to produce the Diffbot Media Bias Chart, below.
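As a rough sketch of how per-article scores might be rolled up into one number per outlet, the following Python groups scores by outlet and averages them. The text does not say how the chart aggregates articles, so the use of a plain mean, the `(outlet, score)` input shape, the skipping of unscored articles, and the outlet names are all assumptions for illustration.

```python
from collections import defaultdict
from statistics import mean


def outlet_bias(article_scores):
    """Average per-article bias scores for each outlet.

    `article_scores` is a hypothetical iterable of (outlet, score) pairs,
    where score is None for articles that could not be scored.
    Outlets with no scored articles are omitted from the result.
    """
    by_outlet = defaultdict(list)
    for outlet, score in article_scores:
        if score is not None:
            by_outlet[outlet].append(score)
    return {outlet: mean(scores) for outlet, scores in by_outlet.items()}


# Made-up outlets and scores, for illustration only.
sample = [
    ("outlet-a.example", 0.5),
    ("outlet-a.example", -0.25),
    ("outlet-b.example", None),
    ("outlet-b.example", -0.5),
]
print(outlet_bias(sample))
```

A mean is sensitive to a few strongly worded articles; a median or a trimmed mean would be a reasonable alternative if that matters for the comparison.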

There are some interesting insights:

- There’s an overall negativity bias to news. There’s truth to the old adage that the front page of the newspaper reports on the worst things that’ve happened around the world that day. The news reports on heinous crimes, pandemics, disasters, and corruption. This overall negativity bias dominates any left-right political bias. However, there is also clearly a per-outlet bias that ranges from heavily critical (reason.com, realclearpolitics.com) to a subdued slight negativity (npr.org, huffpost.com).

- News outlet rivals that compete for your media attention and advertising dollars are often characterized as politically opposed, e.g. the CNN/Fox News rivalry, but both are actually rather centrist relative to the other outlets. The data does not support a bi-modal distribution of political bias (that is, one cluster on the left and another cluster on the right), but rather something that looks more like a normal distribution: a large centrist cluster, with few outlets at the extremes. This may have to do with the fact that the business model of media ultimately competes for large audiences.

Of course, there is no perfectly unbiased methodology for calculating a political bias score, but we hope that this approach spurs more research into developing new methods for how AI can help detect human biases. We showed that two AI components that solve orthogonal problems (named entity recognition and sentiment analysis) can be composed to build a single system whose goal isn’t to replicate human judgement, but to do better.

You can download the full dataset for the above experiment here and reproduce your own bias chart along any sentiment spectrum by using the Diffbot Natural Language API.

References

[1] https://www.poynter.org/fact-checking/media-literacy/2021/should-you-trust-media-bias-charts/

[2] https://adfontesmedia.com/how-ad-fontes-ranks-news-sources/

[3] https://www.allsides.com/media-bias/media-bias-rating-methods
